mirror of

https://github.com/json-iterator/go.git

synced 2025-07-09 23:45:32 +02:00

Compare commits

91 Commits

jsoniter-g

...

revert-55-

| Author | SHA1 | Date | |

|---|---|---|---|

| 787edc95b0 | |||

| 6e5817b773 | |||

| 7480e41836 | |||

| 9215b3c508 | |||

| 64e500f3c8 | |||

| 3307ce3ba2 | |||

| 6f50f15678 | |||

| cee09816e3 | |||

| cdbad22d22 | |||

| b0c9f047e2 | |||

| 6bd13c2948 | |||

| 84ad508437 | |||

| 4f909776cf | |||

| 962c470806 | |||

| 46d443fbad | |||

| 2608d40f2a | |||

| 3cf822853f | |||

| 26708bccc9 | |||

| d75b539bad | |||

| cfffa29c8a | |||

| 925df245d3 | |||

| 962a8cd303 | |||

| 6509ba05df | |||

| 579dbf3c1d | |||

| aa5181db67 | |||

| 67be6df2b1 | |||

| 0f5379494a | |||

| d09e2419ba | |||

| e1a71f6ba1 | |||

| dcb78991c4 | |||

| 9e8238cdc6 | |||

| a4e5abf492 | |||

| 3979955e69 | |||

| 5fd09f0e02 | |||

| af4982b22c | |||

| 29dc1d407d | |||

| 5b27aaa62c | |||

| 106636a191 | |||

| f50c4cfbbe | |||

| 87149ae489 | |||

| c0a4ad72e1 | |||

| 404c0ee44b | |||

| 10c1506f87 | |||

| 9a43fe6468 | |||

| 95e03f2937 | |||

| 4406ed9e62 | |||

| ff027701f5 | |||

| c69b61f879 | |||

| d97f5db769 | |||

| 45bbb40a9f | |||

| e36f926072 | |||

| 59e71bacc8 | |||

| 5cb0d35610 | |||

| 69b742e73a | |||

| a7f992f0e1 | |||

| 4cc44e7380 | |||

| 5310d4aa9a | |||

| 2051e3b8ae | |||

| fe9fa8900e | |||

| ad3a7fde32 | |||

| 377b892102 | |||

| 707ed3b091 | |||

| a7a7c7879a | |||

| f20f74519d | |||

| 7d2ae80c37 | |||

| f6f159e108 | |||

| e5a1e704ad | |||

| 7d5f90261e | |||

| 6126a6d3ca | |||

| 5fbe4e387d | |||

| fc44cb2d91 | |||

| 7e046e6aa7 | |||

| 5488fde97f | |||

| 53f8d370b5 | |||

| 3f1fcaff87 | |||

| 1df353727b | |||

| b893a0359d | |||

| a92111261c | |||

| 91b9e828b7 | |||

| 6bd835aeb1 | |||

| 90888390bc | |||

| ccb972f58c | |||

| 8711c74c85 | |||

| abcf2759ed | |||

| e5476f70e7 | |||

| b986d86f26 | |||

| 9a138f8b6a | |||

| d1aa59e34e | |||

| ceb8c8a733 | |||

| 62028f1ede | |||

| 696f962eda |

10

.idea/libraries/Go_SDK.xml

generated

Normal file

@ -0,0 +1,10 @@

<component name="libraryTable">
  <library name="Go SDK">
    <CLASSES>
      <root url="file:///usr/local/go/src" />
    </CLASSES>
    <SOURCES>
      <root url="file:///usr/local/go/src" />
    </SOURCES>
  </library>
</component>

66

README.md

@ -2,61 +2,55 @@

|

||||

|

||||

jsoniter (json-iterator) is a fast and flexible JSON parser available in [Java](https://github.com/json-iterator/java) and [Go](https://github.com/json-iterator/go)

|

||||

|

||||

# Why jsoniter?

|

||||

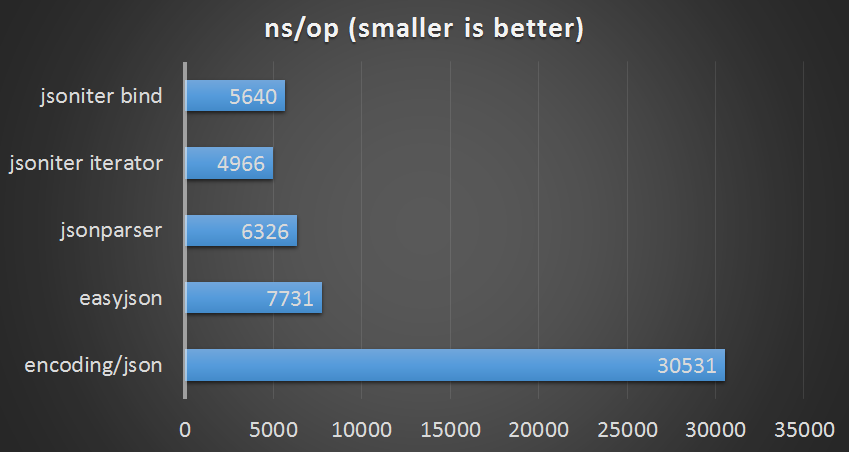

# Benchmark

|

||||

|

||||

* Jsoniter is the fastest JSON parser. It can be up to 10x faster than a normal parser, data binding included. Shameless self [benchmark](http://jsoniter.com/benchmark.html)

|

||||

* Extremely flexible API. You can mix and match three different styles: bind-api, any-api or iterator-api. Check out your [api choices](http://jsoniter.com/api.html)

|

||||

* Unique iterator api can iterate through JSON directly, zero memory allocation! See how [iterator](http://jsoniter.com/api.html#iterator-api) works

|

||||

|

||||

|

||||

# Show off

|

||||

Source code: https://github.com/json-iterator/go-benchmark/blob/master/src/github.com/json-iterator/go-benchmark/benchmark_medium_payload_test.go

|

||||

|

||||

Here is a quick show off; for a more complete report, check out the full [benchmark](http://jsoniter.com/benchmark.html) with [in-depth optimization](http://jsoniter.com/benchmark.html#optimization-used) to back the numbers up

|

||||

Raw Result (easyjson requires static code generation)

| | ns/op | allocation bytes | allocation times |
| --- | --- | --- | --- |
| std decode | 35510 ns/op | 1960 B/op | 99 allocs/op |
| easyjson decode | 8499 ns/op | 160 B/op | 4 allocs/op |
| jsoniter decode | 5623 ns/op | 160 B/op | 3 allocs/op |
| std encode | 2213 ns/op | 712 B/op | 5 allocs/op |
| easyjson encode | 883 ns/op | 576 B/op | 3 allocs/op |
| jsoniter encode | 837 ns/op | 384 B/op | 4 allocs/op |

# Bind-API is the best

|

||||

# Usage

|

||||

|

||||

Bind-api should always be the first choice. Given this JSON document `[0,1,2,3]`

|

||||

100% compatibility with standard lib

|

||||

|

||||

Parse with Go bind-api

|

||||

Replace

|

||||

|

||||

```go

|

||||

import "encoding/json"

|

||||

json.Marshal(&data)

|

||||

```

|

||||

|

||||

with

|

||||

|

||||

```go

|

||||

import "github.com/json-iterator/go"

|

||||

iter := jsoniter.ParseString(`[0,1,2,3]`)

|

||||

val := iter.Read()

|

||||

fmt.Println(val)

|

||||

jsoniter.Marshal(&data)

|

||||

```

|

||||

|

||||

# Iterator-API for quick extraction

|

||||

Replace

|

||||

|

||||

When you do not need to get all the data back, just extract some.

|

||||

```go

|

||||

import "encoding/json"

|

||||

json.Unmarshal(input, &data)

|

||||

```

|

||||

|

||||

Parse with Go iterator-api

|

||||

with

|

||||

|

||||

```go

|

||||

import "github.com/json-iterator/go"

|

||||

iter := jsoniter.ParseString(`[0, [1, 2], [3, 4], 5]`)

|

||||

count := 0

|

||||

for iter.ReadArray() {

|

||||

iter.Skip()

|

||||

count++

|

||||

}

|

||||

fmt.Println(count) // 4

|

||||

jsoniter.Unmarshal(input, &data)

|

||||

```

|

||||

|

||||

# Any-API for maximum flexibility

|

||||

|

||||

Parse with Go any-api

|

||||

|

||||

```go

|

||||

import "github.com/json-iterator/go"

|

||||

iter := jsoniter.ParseString(`[{"field1":"11","field2":"12"},{"field1":"21","field2":"22"}]`)

|

||||

val := iter.ReadAny()

|

||||

fmt.Println(val.ToInt(1, "field2")) // 22

|

||||

```

|

||||

|

||||

Notice you can extract from nested data structures, and convert any type to the type you want.

|

||||

|

||||

# How to get

|

||||

|

||||

```

|

||||

|

||||

@ -1014,4 +1014,4 @@ func typeAndKind(v interface{}) (reflect.Type, reflect.Kind) {

|

||||

k = t.Kind()

|

||||

}

|

||||

return t, k

|

||||

}

|

||||

}

|

||||

|

||||

47

example_test.go

Normal file

@ -0,0 +1,47 @@

package jsoniter_test

import (
	"fmt"
	"os"

	"github.com/json-iterator/go"
)

func ExampleMarshal() {
	type ColorGroup struct {
		ID     int
		Name   string
		Colors []string
	}
	group := ColorGroup{
		ID:     1,
		Name:   "Reds",
		Colors: []string{"Crimson", "Red", "Ruby", "Maroon"},
	}
	b, err := jsoniter.Marshal(group)
	if err != nil {
		fmt.Println("error:", err)
	}
	os.Stdout.Write(b)
	// Output:
	// {"ID":1,"Name":"Reds","Colors":["Crimson","Red","Ruby","Maroon"]}
}

func ExampleUnmarshal() {
	var jsonBlob = []byte(`[
		{"Name": "Platypus", "Order": "Monotremata"},
		{"Name": "Quoll", "Order": "Dasyuromorphia"}
	]`)
	type Animal struct {
		Name  string
		Order string
	}
	var animals []Animal
	err := jsoniter.Unmarshal(jsonBlob, &animals)
	if err != nil {
		fmt.Println("error:", err)
	}
	fmt.Printf("%+v", animals)
	// Output:
	// [{Name:Platypus Order:Monotremata} {Name:Quoll Order:Dasyuromorphia}]
}

@ -1,13 +1,37 @@

|

||||

// Package jsoniter implements encoding and decoding of JSON as defined in

|

||||

// RFC 4627 and provides interfaces with identical syntax of standard lib encoding/json.

|

||||

// Converting from encoding/json to jsoniter is no more than replacing the package with jsoniter

|

||||

// and variable type declarations (if any).

|

||||

// jsoniter interfaces give 100% compatibility with code using standard lib.

|

||||

//

|

||||

// "JSON and Go"

|

||||

// (https://golang.org/doc/articles/json_and_go.html)

|

||||

// gives a description of how Marshal/Unmarshal operate

|

||||

// between arbitrary or predefined json objects and bytes,

|

||||

// and it applies to jsoniter.Marshal/Unmarshal as well.

|

||||

package jsoniter

|

||||

|

||||

import (

|

||||

"io"

|

||||

"bytes"

|

||||

"encoding/json"

|

||||

"errors"

|

||||

"io"

|

||||

"reflect"

|

||||

"unsafe"

|

||||

)

|

||||

|

||||

// Unmarshal adapts to json/encoding APIs

|

||||

// Unmarshal adapts to json/encoding Unmarshal API

|

||||

//

|

||||

// Unmarshal parses the JSON-encoded data and stores the result in the value pointed to by v.

|

||||

// Refer to https://godoc.org/encoding/json#Unmarshal for more information

|

||||

func Unmarshal(data []byte, v interface{}) error {

|

||||

data = data[:lastNotSpacePos(data)]

|

||||

iter := ParseBytes(data)

|

||||

typ := reflect.TypeOf(v)

|

||||

if typ.Kind() != reflect.Ptr {

|

||||

// return non-pointer error

|

||||

return errors.New("the second param must be ptr type")

|

||||

}

|

||||

iter.ReadVal(v)

|

||||

if iter.head == iter.tail {

|

||||

iter.loadMore()

|

||||

@ -21,7 +45,9 @@ func Unmarshal(data []byte, v interface{}) error {

|

||||

return iter.Error

|

||||

}

|

||||

|

||||

// UnmarshalAny adapts to

|

||||

func UnmarshalAny(data []byte) (Any, error) {

|

||||

data = data[:lastNotSpacePos(data)]

|

||||

iter := ParseBytes(data)

|

||||

any := iter.ReadAny()

|

||||

if iter.head == iter.tail {

|

||||

@ -36,8 +62,18 @@ func UnmarshalAny(data []byte) (Any, error) {

|

||||

return any, iter.Error

|

||||

}

|

||||

|

||||

func lastNotSpacePos(data []byte) int {

|

||||

for i := len(data) - 1; i >= 0; i-- {

|

||||

if data[i] != ' ' && data[i] != '\t' && data[i] != '\r' && data[i] != '\n' {

|

||||

return i + 1

|

||||

}

|

||||

}

|

||||

return 0

|

||||

}
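The `lastNotSpacePos` helper above appears to exist so that trailing whitespace does not look like leftover input after the value has been read. A minimal standalone sketch of the same idea follows; `trimTrailingSpace` is a hypothetical name used only for illustration and is not part of jsoniter.

```go
package main

import "fmt"

// trimTrailingSpace returns data without trailing JSON whitespace
// (space, tab, carriage return, newline). Hypothetical helper that
// mirrors what Unmarshal does with lastNotSpacePos in the diff.
func trimTrailingSpace(data []byte) []byte {
	for i := len(data) - 1; i >= 0; i-- {
		switch data[i] {
		case ' ', '\t', '\r', '\n':
			continue // still inside the trailing whitespace run
		default:
			return data[:i+1] // keep everything up to the last non-space byte
		}
	}
	return data[:0] // input was empty or whitespace only
}

func main() {
	fmt.Printf("%q\n", trimTrailingSpace([]byte("[1,2,3] \r\n")))
	fmt.Printf("%q\n", trimTrailingSpace([]byte(" \t\n")))
}
```

The slice is re-sliced rather than copied, so the trimmed result shares memory with the caller's buffer, which matches the `data = data[:lastNotSpacePos(data)]` pattern used in the diff.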

|

||||

|

||||

func UnmarshalFromString(str string, v interface{}) error {

|

||||

data := []byte(str)

|

||||

data = data[:lastNotSpacePos(data)]

|

||||

iter := ParseBytes(data)

|

||||

iter.ReadVal(v)

|

||||

if iter.head == iter.tail {

|

||||

@ -54,6 +90,7 @@ func UnmarshalFromString(str string, v interface{}) error {

|

||||

|

||||

func UnmarshalAnyFromString(str string) (Any, error) {

|

||||

data := []byte(str)

|

||||

data = data[:lastNotSpacePos(data)]

|

||||

iter := ParseBytes(data)

|

||||

any := iter.ReadAny()

|

||||

if iter.head == iter.tail {

|

||||

@ -68,15 +105,17 @@ func UnmarshalAnyFromString(str string) (Any, error) {

|

||||

return nil, iter.Error

|

||||

}

|

||||

|

||||

// Marshal adapts to json/encoding Marshal API

|

||||

//

|

||||

// Marshal returns the JSON encoding of v, adapts to json/encoding Marshal API

|

||||

// Refer to https://godoc.org/encoding/json#Marshal for more information

|

||||

func Marshal(v interface{}) ([]byte, error) {

|

||||

buf := &bytes.Buffer{}

|

||||

stream := NewStream(buf, 4096)

|

||||

stream := NewStream(nil, 256)

|

||||

stream.WriteVal(v)

|

||||

stream.Flush()

|

||||

if stream.Error != nil {

|

||||

return nil, stream.Error

|

||||

}

|

||||

return buf.Bytes(), nil

|

||||

return stream.Buffer(), nil

|

||||

}

|

||||

|

||||

func MarshalToString(v interface{}) (string, error) {

|

||||

@ -85,4 +124,68 @@ func MarshalToString(v interface{}) (string, error) {

|

||||

return "", err

|

||||

}

|

||||

return string(buf), nil

|

||||

}

|

||||

}

|

||||

|

||||

// NewDecoder adapts to json/stream NewDecoder API.

|

||||

//

|

||||

// NewDecoder returns a new decoder that reads from r.

|

||||

//

|

||||

// Instead of a json/encoding Decoder, an AdaptedDecoder is returned

|

||||

// Refer to https://godoc.org/encoding/json#NewDecoder for more information

|

||||

func NewDecoder(reader io.Reader) *AdaptedDecoder {

|

||||

iter := Parse(reader, 512)

|

||||

return &AdaptedDecoder{iter}

|

||||

}

|

||||

|

||||

// AdaptedDecoder reads and decodes JSON values from an input stream.

|

||||

// AdaptedDecoder provides identical APIs with json/stream Decoder (Token() and UseNumber() are in progress)

|

||||

type AdaptedDecoder struct {

|

||||

iter *Iterator

|

||||

}

|

||||

|

||||

func (adapter *AdaptedDecoder) Decode(obj interface{}) error {

|

||||

adapter.iter.ReadVal(obj)

|

||||

err := adapter.iter.Error

|

||||

if err == io.EOF {

|

||||

return nil

|

||||

}

|

||||

return adapter.iter.Error

|

||||

}

|

||||

|

||||

func (adapter *AdaptedDecoder) More() bool {

|

||||

return adapter.iter.head != adapter.iter.tail

|

||||

}

|

||||

|

||||

func (adapter *AdaptedDecoder) Buffered() io.Reader {

|

||||

remaining := adapter.iter.buf[adapter.iter.head:adapter.iter.tail]

|

||||

return bytes.NewReader(remaining)

|

||||

}

|

||||

|

||||

func (decoder *AdaptedDecoder) UseNumber() {

|

||||

RegisterTypeDecoder("interface {}", func(ptr unsafe.Pointer, iter *Iterator) {

|

||||

if iter.WhatIsNext() == Number {

|

||||

*((*interface{})(ptr)) = json.Number(iter.readNumberAsString())

|

||||

} else {

|

||||

*((*interface{})(ptr)) = iter.Read()

|

||||

}

|

||||

})

|

||||

}
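For comparison, this is the standard library behaviour that `UseNumber` on the adapter is presumably meant to reproduce: numbers decoded into `interface{}` arrive as `json.Number` (the literal digits) instead of `float64`. The sketch uses only `encoding/json`, not jsoniter, and `numberLiteral` is a hypothetical helper name.

```go
package main

import (
	"encoding/json"
	"fmt"
	"strings"
)

// numberLiteral decodes a JSON object with UseNumber enabled and returns
// the exact literal text of the number stored under key.
// Hypothetical helper for illustration only.
func numberLiteral(input, key string) (string, error) {
	dec := json.NewDecoder(strings.NewReader(input))
	dec.UseNumber() // numbers become json.Number instead of float64
	var obj map[string]interface{}
	if err := dec.Decode(&obj); err != nil {
		return "", err
	}
	n, ok := obj[key].(json.Number)
	if !ok {
		return "", fmt.Errorf("field %q is not a number", key)
	}
	return n.String(), nil
}

func main() {
	// A value too large for float64 to represent exactly.
	lit, err := numberLiteral(`{"id": 12345678901234567890}`, "id")
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	fmt.Println(lit)
}
```

Note that the adapter in the diff calls the package-level `RegisterTypeDecoder`, so as written the setting looks global rather than scoped to one decoder, unlike the standard library version shown here.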

|

||||

|

||||

func NewEncoder(writer io.Writer) *AdaptedEncoder {

|

||||

stream := NewStream(writer, 512)

|

||||

return &AdaptedEncoder{stream}

|

||||

}

|

||||

|

||||

type AdaptedEncoder struct {

|

||||

stream *Stream

|

||||

}

|

||||

|

||||

func (adapter *AdaptedEncoder) Encode(val interface{}) error {

|

||||

adapter.stream.WriteVal(val)

|

||||

adapter.stream.Flush()

|

||||

return adapter.stream.Error

|

||||

}

|

||||

|

||||

func (adapter *AdaptedEncoder) SetIndent(prefix, indent string) {

|

||||

adapter.stream.IndentionStep = len(indent)

|

||||

}

|

||||

|

||||

@ -98,6 +98,10 @@ func Wrap(val interface{}) Any {

|

||||

if val == nil {

|

||||

return &nilAny{}

|

||||

}

|

||||

asAny, isAny := val.(Any)

|

||||

if isAny {

|

||||

return asAny

|

||||

}

|

||||

type_ := reflect.TypeOf(val)

|

||||

switch type_.Kind() {

|

||||

case reflect.Slice:

|

||||

|

||||

@ -1,9 +1,9 @@

|

||||

package jsoniter

|

||||

|

||||

import (

|

||||

"unsafe"

|

||||

"fmt"

|

||||

"reflect"

|

||||

"unsafe"

|

||||

)

|

||||

|

||||

type arrayLazyAny struct {

|

||||

@ -44,7 +44,7 @@ func (any *arrayLazyAny) fillCacheUntil(target int) Any {

|

||||

return any.cache[target]

|

||||

}

|

||||

iter := any.Parse()

|

||||

if (len(any.remaining) == len(any.buf)) {

|

||||

if len(any.remaining) == len(any.buf) {

|

||||

iter.head++

|

||||

c := iter.nextToken()

|

||||

if c != ']' {

|

||||

@ -337,9 +337,9 @@ func (any *arrayLazyAny) GetInterface() interface{} {

|

||||

|

||||

type arrayAny struct {

|

||||

baseAny

|

||||

err error

|

||||

cache []Any

|

||||

val reflect.Value

|

||||

err error

|

||||

cache []Any

|

||||

val reflect.Value

|

||||

}

|

||||

|

||||

func wrapArray(val interface{}) *arrayAny {

|

||||

@ -536,4 +536,4 @@ func (any *arrayAny) WriteTo(stream *Stream) {

|

||||

func (any *arrayAny) GetInterface() interface{} {

|

||||

any.fillCache()

|

||||

return any.cache

|

||||

}

|

||||

}

|

||||

|

||||

@ -2,15 +2,15 @@ package jsoniter

|

||||

|

||||

import (

|

||||

"io"

|

||||

"unsafe"

|

||||

"strconv"

|

||||

"unsafe"

|

||||

)

|

||||

|

||||

type float64LazyAny struct {

|

||||

baseAny

|

||||

buf []byte

|

||||

iter *Iterator

|

||||

err error

|

||||

buf []byte

|

||||

iter *Iterator

|

||||

err error

|

||||

cache float64

|

||||

}

|

||||

|

||||

@ -163,4 +163,4 @@ func (any *floatAny) WriteTo(stream *Stream) {

|

||||

|

||||

func (any *floatAny) GetInterface() interface{} {

|

||||

return any.val

|

||||

}

|

||||

}

|

||||

|

||||

@ -67,4 +67,4 @@ func (any *int32Any) Parse() *Iterator {

|

||||

|

||||

func (any *int32Any) GetInterface() interface{} {

|

||||

return any.val

|

||||

}

|

||||

}

|

||||

|

||||

@ -2,8 +2,8 @@ package jsoniter

|

||||

|

||||

import (

|

||||

"io"

|

||||

"unsafe"

|

||||

"strconv"

|

||||

"unsafe"

|

||||

)

|

||||

|

||||

type int64LazyAny struct {

|

||||

@ -163,4 +163,4 @@ func (any *int64Any) Parse() *Iterator {

|

||||

|

||||

func (any *int64Any) GetInterface() interface{} {

|

||||

return any.val

|

||||

}

|

||||

}

|

||||

|

||||

@ -62,4 +62,4 @@ func (any *nilAny) Parse() *Iterator {

|

||||

|

||||

func (any *nilAny) GetInterface() interface{} {

|

||||

return nil

|

||||

}

|

||||

}

|

||||

|

||||

@ -1,9 +1,9 @@

|

||||

package jsoniter

|

||||

|

||||

import (

|

||||

"unsafe"

|

||||

"fmt"

|

||||

"reflect"

|

||||

"unsafe"

|

||||

)

|

||||

|

||||

type objectLazyAny struct {

|

||||

@ -322,6 +322,7 @@ func (any *objectLazyAny) IterateObject() (func() (string, Any, bool), bool) {

|

||||

any.err = iter.Error

|

||||

return key, value, true

|

||||

} else {

|

||||

nextKey = ""

|

||||

remaining = nil

|

||||

any.remaining = nil

|

||||

any.err = iter.Error

|

||||

|

||||

@ -5,7 +5,7 @@ import (

|

||||

"strconv"

|

||||

)

|

||||

|

||||

type stringLazyAny struct{

|

||||

type stringLazyAny struct {

|

||||

baseAny

|

||||

buf []byte

|

||||

iter *Iterator

|

||||

@ -136,9 +136,9 @@ func (any *stringLazyAny) GetInterface() interface{} {

|

||||

return any.cache

|

||||

}

|

||||

|

||||

type stringAny struct{

|

||||

type stringAny struct {

|

||||

baseAny

|

||||

err error

|

||||

err error

|

||||

val string

|

||||

}

|

||||

|

||||

@ -146,7 +146,6 @@ func (any *stringAny) Parse() *Iterator {

|

||||

return nil

|

||||

}

|

||||

|

||||

|

||||

func (any *stringAny) ValueType() ValueType {

|

||||

return String

|

||||

}

|

||||

@ -228,4 +227,4 @@ func (any *stringAny) WriteTo(stream *Stream) {

|

||||

|

||||

func (any *stringAny) GetInterface() interface{} {

|

||||

return any.val

|

||||

}

|

||||

}

|

||||

|

||||

@ -1,12 +1,11 @@

|

||||

package jsoniter

|

||||

|

||||

import (

|

||||

"io"

|

||||

"strconv"

|

||||

"unsafe"

|

||||

"io"

|

||||

)

|

||||

|

||||

|

||||

type uint64LazyAny struct {

|

||||

baseAny

|

||||

buf []byte

|

||||

@ -164,4 +163,4 @@ func (any *uint64Any) Parse() *Iterator {

|

||||

|

||||

func (any *uint64Any) GetInterface() interface{} {

|

||||

return any.val

|

||||

}

|

||||

}

|

||||

|

||||

@ -1,3 +1,9 @@

|

||||

//

|

||||

// Besides, jsoniter.Iterator provides a different set of interfaces

|

||||

// iterating given bytes/string/reader

|

||||

// and yielding parsed elements one by one.

|

||||

// This set of interfaces reads input as required and gives

|

||||

// better performance.

|

||||

package jsoniter

|

||||

|

||||

import (

|

||||

@ -276,4 +282,3 @@ func (iter *Iterator) ReadBase64() (ret []byte) {

|

||||

}

|

||||

return ret[:n]

|

||||

}

|

||||

|

||||

|

||||

@ -18,12 +18,11 @@ func (iter *Iterator) ReadArray() (ret bool) {

|

||||

case ',':

|

||||

return true

|

||||

default:

|

||||

iter.reportError("ReadArray", "expect [ or , or ] or n, but found: " + string([]byte{c}))

|

||||

iter.reportError("ReadArray", "expect [ or , or ] or n, but found: "+string([]byte{c}))

|

||||

return

|

||||

}

|

||||

}

|

||||

|

||||

|

||||

func (iter *Iterator) ReadArrayCB(callback func(*Iterator) bool) (ret bool) {

|

||||

c := iter.nextToken()

|

||||

if c == '[' {

|

||||

@ -46,6 +45,6 @@ func (iter *Iterator) ReadArrayCB(callback func(*Iterator) bool) (ret bool) {

|

||||

iter.skipFixedBytes(3)

|

||||

return true // null

|

||||

}

|

||||

iter.reportError("ReadArrayCB", "expect [ or n, but found: " + string([]byte{c}))

|

||||

iter.reportError("ReadArrayCB", "expect [ or n, but found: "+string([]byte{c}))

|

||||

return false

|

||||

}

|

||||

}

|

||||

|

||||

@ -2,11 +2,13 @@ package jsoniter

|

||||

|

||||

import (

|

||||

"io"

|

||||

"math/big"

|

||||

"strconv"

|

||||

"unsafe"

|

||||

)

|

||||

|

||||

var floatDigits []int8

|

||||

|

||||

const invalidCharForNumber = int8(-1)

|

||||

const endOfNumber = int8(-2)

|

||||

const dotInNumber = int8(-3)

|

||||

@ -19,11 +21,45 @@ func init() {

|

||||

for i := int8('0'); i <= int8('9'); i++ {

|

||||

floatDigits[i] = i - int8('0')

|

||||

}

|

||||

floatDigits[','] = endOfNumber;

|

||||

floatDigits[']'] = endOfNumber;

|

||||

floatDigits['}'] = endOfNumber;

|

||||

floatDigits[' '] = endOfNumber;

|

||||

floatDigits['.'] = dotInNumber;

|

||||

floatDigits[','] = endOfNumber

|

||||

floatDigits[']'] = endOfNumber

|

||||

floatDigits['}'] = endOfNumber

|

||||

floatDigits[' '] = endOfNumber

|

||||

floatDigits['\t'] = endOfNumber

|

||||

floatDigits['\n'] = endOfNumber

|

||||

floatDigits['.'] = dotInNumber

|

||||

}
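The `init` function above fills a 256-entry table so the float parser can classify each input byte with a single index operation. A self-contained sketch of that lookup-table technique is shown below; the names `digits`, `invalidChar`, and `classify` are illustrative assumptions, and the terminator set copies the one in the diff.

```go
package main

import "fmt"

const (
	invalidChar = int8(-1) // byte cannot appear in a number
	endOfNumber = int8(-2) // byte terminates a number
	dotInNumber = int8(-3) // decimal point
)

// digits maps every possible byte to its digit value or to a marker.
var digits [256]int8

func init() {
	for i := range digits {
		digits[i] = invalidChar
	}
	for c := byte('0'); c <= '9'; c++ {
		digits[c] = int8(c - '0')
	}
	// Same terminators as the diff: comma, closing brackets, space, tab, newline.
	for _, c := range []byte{',', ']', '}', ' ', '\t', '\n'} {
		digits[c] = endOfNumber
	}
	digits['.'] = dotInNumber
}

// classify returns the digit value (0 to 9) or one of the negative markers.
func classify(c byte) int8 {
	return digits[c]
}

func main() {
	for _, c := range []byte("7.,x") {
		fmt.Printf("%q -> %d\n", c, classify(c))
	}
}
```

Using negative markers keeps digit values and control codes in one `int8`, so the hot loop needs a single table read followed by a `switch`.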

|

||||

|

||||

func (iter *Iterator) ReadBigFloat() (ret *big.Float) {

|

||||

str := iter.readNumberAsString()

|

||||

if iter.Error != nil && iter.Error != io.EOF {

|

||||

return nil

|

||||

}

|

||||

prec := 64

|

||||

if len(str) > prec {

|

||||

prec = len(str)

|

||||

}

|

||||

val, _, err := big.ParseFloat(str, 10, uint(prec), big.ToZero)

|

||||

if err != nil {

|

||||

iter.Error = err

|

||||

return nil

|

||||

}

|

||||

return val

|

||||

}

|

||||

|

||||

func (iter *Iterator) ReadBigInt() (ret *big.Int) {

|

||||

str := iter.readNumberAsString()

|

||||

if iter.Error != nil && iter.Error != io.EOF {

|

||||

return nil

|

||||

}

|

||||

ret = big.NewInt(0)

|

||||

var success bool

|

||||

ret, success = ret.SetString(str, 10)

|

||||

if !success {

|

||||

iter.reportError("ReadBigInt", "invalid big int")

|

||||

return nil

|

||||

}

|

||||

return ret

|

||||

}
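`ReadBigFloat` and `ReadBigInt` above hand the raw number text to `math/big`. The following sketch isolates those two calls so the parsing step can be tried without an iterator; `parseBigInt` and `parseBigFloat` are hypothetical wrappers, and the precision rule (at least 64 bits, otherwise the length of the literal) is copied from the diff.

```go
package main

import (
	"fmt"
	"math/big"
)

// parseBigInt parses a base-10 integer literal of arbitrary size.
// The boolean is false when the text is not a valid integer.
func parseBigInt(s string) (*big.Int, bool) {
	return new(big.Int).SetString(s, 10)
}

// parseBigFloat parses a base-10 float literal, using a precision of at
// least 64 bits and growing it with the length of the literal.
func parseBigFloat(s string) (*big.Float, error) {
	prec := 64
	if len(s) > prec {
		prec = len(s)
	}
	f, _, err := big.ParseFloat(s, 10, uint(prec), big.ToZero)
	return f, err
}

func main() {
	n, ok := parseBigInt("123456789012345678901234567890")
	fmt.Println(n, ok)

	f, err := parseBigFloat("1.5")
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	fmt.Println(f.Text('f', 1))
}
```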

|

||||

|

||||

func (iter *Iterator) ReadFloat32() (ret float32) {

|

||||

@ -40,7 +76,7 @@ func (iter *Iterator) readPositiveFloat32() (ret float32) {

|

||||

value := uint64(0)

|

||||

c := byte(' ')

|

||||

i := iter.head

|

||||

non_decimal_loop:

|

||||

non_decimal_loop:

|

||||

for ; i < iter.tail; i++ {

|

||||

c = iter.buf[i]

|

||||

ind := floatDigits[c]

|

||||

@ -56,14 +92,14 @@ func (iter *Iterator) readPositiveFloat32() (ret float32) {

|

||||

if value > uint64SafeToMultiple10 {

|

||||

return iter.readFloat32SlowPath()

|

||||

}

|

||||

value = (value << 3) + (value << 1) + uint64(ind); // value = value * 10 + ind;

|

||||

value = (value << 3) + (value << 1) + uint64(ind) // value = value * 10 + ind;

|

||||

}

|

||||

if c == '.' {

|

||||

i++

|

||||

decimalPlaces := 0;

|

||||

decimalPlaces := 0

|

||||

for ; i < iter.tail; i++ {

|

||||

c = iter.buf[i]

|

||||

ind := floatDigits[c];

|

||||

ind := floatDigits[c]

|

||||

switch ind {

|

||||

case endOfNumber:

|

||||

if decimalPlaces > 0 && decimalPlaces < len(POW10) {

|

||||

@ -71,7 +107,7 @@ func (iter *Iterator) readPositiveFloat32() (ret float32) {

|

||||

return float32(float64(value) / float64(POW10[decimalPlaces]))

|

||||

}

|

||||

// too many decimal places

|

||||

return iter.readFloat32SlowPath()

|

||||

return iter.readFloat32SlowPath()

|

||||

case invalidCharForNumber:

|

||||

fallthrough

|

||||

case dotInNumber:

|

||||

@ -87,10 +123,10 @@ func (iter *Iterator) readPositiveFloat32() (ret float32) {

|

||||

return iter.readFloat32SlowPath()

|

||||

}

|

||||

|

||||

func (iter *Iterator) readFloat32SlowPath() (ret float32) {

|

||||

func (iter *Iterator) readNumberAsString() (ret string) {

|

||||

strBuf := [16]byte{}

|

||||

str := strBuf[0:0]

|

||||

load_loop:

|

||||

load_loop:

|

||||

for {

|

||||

for i := iter.head; i < iter.tail; i++ {

|

||||

c := iter.buf[i]

|

||||

@ -99,6 +135,7 @@ func (iter *Iterator) readFloat32SlowPath() (ret float32) {

|

||||

str = append(str, c)

|

||||

continue

|

||||

default:

|

||||

iter.head = i

|

||||

break load_loop

|

||||

}

|

||||

}

|

||||

@ -109,7 +146,18 @@ func (iter *Iterator) readFloat32SlowPath() (ret float32) {

|

||||

if iter.Error != nil && iter.Error != io.EOF {

|

||||

return

|

||||

}

|

||||

val, err := strconv.ParseFloat(*(*string)(unsafe.Pointer(&str)), 32)

|

||||

if len(str) == 0 {

|

||||

iter.reportError("readNumberAsString", "invalid number")

|

||||

}

|

||||

return *(*string)(unsafe.Pointer(&str))

|

||||

}

|

||||

|

||||

func (iter *Iterator) readFloat32SlowPath() (ret float32) {

|

||||

str := iter.readNumberAsString()

|

||||

if iter.Error != nil && iter.Error != io.EOF {

|

||||

return

|

||||

}

|

||||

val, err := strconv.ParseFloat(str, 32)

|

||||

if err != nil {

|

||||

iter.Error = err

|

||||

return

|

||||

@ -131,7 +179,7 @@ func (iter *Iterator) readPositiveFloat64() (ret float64) {

|

||||

value := uint64(0)

|

||||

c := byte(' ')

|

||||

i := iter.head

|

||||

non_decimal_loop:

|

||||

non_decimal_loop:

|

||||

for ; i < iter.tail; i++ {

|

||||

c = iter.buf[i]

|

||||

ind := floatDigits[c]

|

||||

@ -147,14 +195,14 @@ func (iter *Iterator) readPositiveFloat64() (ret float64) {

|

||||

if value > uint64SafeToMultiple10 {

|

||||

return iter.readFloat64SlowPath()

|

||||

}

|

||||

value = (value << 3) + (value << 1) + uint64(ind); // value = value * 10 + ind;

|

||||

value = (value << 3) + (value << 1) + uint64(ind) // value = value * 10 + ind;

|

||||

}

|

||||

if c == '.' {

|

||||

i++

|

||||

decimalPlaces := 0;

|

||||

decimalPlaces := 0

|

||||

for ; i < iter.tail; i++ {

|

||||

c = iter.buf[i]

|

||||

ind := floatDigits[c];

|

||||

ind := floatDigits[c]

|

||||

switch ind {

|

||||

case endOfNumber:

|

||||

if decimalPlaces > 0 && decimalPlaces < len(POW10) {

|

||||

@ -179,28 +227,11 @@ func (iter *Iterator) readPositiveFloat64() (ret float64) {

|

||||

}

|

||||

|

||||

func (iter *Iterator) readFloat64SlowPath() (ret float64) {

|

||||

strBuf := [16]byte{}

|

||||

str := strBuf[0:0]

|

||||

load_loop:

|

||||

for {

|

||||

for i := iter.head; i < iter.tail; i++ {

|

||||

c := iter.buf[i]

|

||||

switch c {

|

||||

case '-', '.', 'e', 'E', '0', '1', '2', '3', '4', '5', '6', '7', '8', '9':

|

||||

str = append(str, c)

|

||||

continue

|

||||

default:

|

||||

break load_loop

|

||||

}

|

||||

}

|

||||

if !iter.loadMore() {

|

||||

break

|

||||

}

|

||||

}

|

||||

str := iter.readNumberAsString()

|

||||

if iter.Error != nil && iter.Error != io.EOF {

|

||||

return

|

||||

}

|

||||

val, err := strconv.ParseFloat(*(*string)(unsafe.Pointer(&str)), 64)

|

||||

val, err := strconv.ParseFloat(str, 64)

|

||||

if err != nil {

|

||||

iter.Error = err

|

||||

return

|

||||

|

||||

@ -6,8 +6,8 @@ import (

|

||||

|

||||

var intDigits []int8

|

||||

|

||||

const uint32SafeToMultiply10 = uint32(0xffffffff) / 10 - 1

|

||||

const uint64SafeToMultiple10 = uint64(0xffffffffffffffff) / 10 - 1

|

||||

const uint32SafeToMultiply10 = uint32(0xffffffff)/10 - 1

|

||||

const uint64SafeToMultiple10 = uint64(0xffffffffffffffff)/10 - 1

|

||||

const int64Max = uint64(0x7fffffffffffffff)

|

||||

const int32Max = uint32(0x7fffffff)

|

||||

const int16Max = uint32(0x7fff)
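The `uint64SafeToMultiple10` constant pairs with the shift-based accumulation seen in the float fast path: before each `value*10 + digit` step, the parser checks that the multiplication cannot overflow. A minimal sketch under that reading follows; `parseDigits` and `safeToMultiply10` are hypothetical names, and where the diff falls back to a slow path this sketch simply reports failure.

```go
package main

import "fmt"

// safeToMultiply10 is the largest value for which value*10 + 9 is
// guaranteed to fit in a uint64 (same formula as in the diff).
const safeToMultiply10 = ^uint64(0)/10 - 1

// parseDigits accumulates ASCII decimal digits into a uint64.
// It returns ok=false for empty input, non-digit bytes, or when the
// next step might overflow.
func parseDigits(s string) (value uint64, ok bool) {
	if len(s) == 0 {
		return 0, false
	}
	for i := 0; i < len(s); i++ {
		c := s[i]
		if c < '0' || c > '9' {
			return 0, false
		}
		if value > safeToMultiply10 {
			return 0, false // the diff switches to a slow path here
		}
		// (value << 3) + (value << 1) equals value*8 + value*2 = value*10.
		value = (value << 3) + (value << 1) + uint64(c-'0')
	}
	return value, true
}

func main() {
	fmt.Println(parseDigits("12345"))
	fmt.Println(parseDigits("99999999999999999999999"))
}
```

The guard is conservative: it also rejects a few values near the top of the `uint64` range that would still fit, which is acceptable when a slower exact path exists.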

|

||||

@ -17,7 +17,7 @@ const uint8Max = uint32(0xffff)

|

||||

|

||||

func init() {

|

||||

intDigits = make([]int8, 256)

|

||||

for i := 0; i < len(floatDigits); i++ {

|

||||

for i := 0; i < len(intDigits); i++ {

|

||||

intDigits[i] = invalidCharForNumber

|

||||

}

|

||||

for i := int8('0'); i <= int8('9'); i++ {

|

||||

@ -37,15 +37,15 @@ func (iter *Iterator) ReadInt8() (ret int8) {

|

||||

c := iter.nextToken()

|

||||

if c == '-' {

|

||||

val := iter.readUint32(iter.readByte())

|

||||

if val > int8Max + 1 {

|

||||

iter.reportError("ReadInt8", "overflow: " + strconv.FormatInt(int64(val), 10))

|

||||

if val > int8Max+1 {

|

||||

iter.reportError("ReadInt8", "overflow: "+strconv.FormatInt(int64(val), 10))

|

||||

return

|

||||

}

|

||||

return -int8(val)

|

||||

} else {

|

||||

val := iter.readUint32(c)

|

||||

if val > int8Max {

|

||||

iter.reportError("ReadInt8", "overflow: " + strconv.FormatInt(int64(val), 10))

|

||||

iter.reportError("ReadInt8", "overflow: "+strconv.FormatInt(int64(val), 10))

|

||||

return

|

||||

}

|

||||

return int8(val)

|

||||

@ -55,7 +55,7 @@ func (iter *Iterator) ReadInt8() (ret int8) {

|

||||

func (iter *Iterator) ReadUint8() (ret uint8) {

|

||||

val := iter.readUint32(iter.nextToken())

|

||||

if val > uint8Max {

|

||||

iter.reportError("ReadUint8", "overflow: " + strconv.FormatInt(int64(val), 10))

|

||||

iter.reportError("ReadUint8", "overflow: "+strconv.FormatInt(int64(val), 10))

|

||||

return

|

||||

}

|

||||

return uint8(val)

|

||||

@ -65,15 +65,15 @@ func (iter *Iterator) ReadInt16() (ret int16) {

|

||||

c := iter.nextToken()

|

||||

if c == '-' {

|

||||

val := iter.readUint32(iter.readByte())

|

||||

if val > int16Max + 1 {

|

||||

iter.reportError("ReadInt16", "overflow: " + strconv.FormatInt(int64(val), 10))

|

||||

if val > int16Max+1 {

|

||||

iter.reportError("ReadInt16", "overflow: "+strconv.FormatInt(int64(val), 10))

|

||||

return

|

||||

}

|

||||

return -int16(val)

|

||||

} else {

|

||||

val := iter.readUint32(c)

|

||||

if val > int16Max {

|

||||

iter.reportError("ReadInt16", "overflow: " + strconv.FormatInt(int64(val), 10))

|

||||

iter.reportError("ReadInt16", "overflow: "+strconv.FormatInt(int64(val), 10))

|

||||

return

|

||||

}

|

||||

return int16(val)

|

||||

@ -83,7 +83,7 @@ func (iter *Iterator) ReadInt16() (ret int16) {

|

||||

func (iter *Iterator) ReadUint16() (ret uint16) {

|

||||

val := iter.readUint32(iter.nextToken())

|

||||

if val > uint16Max {

|

||||

iter.reportError("ReadUint16", "overflow: " + strconv.FormatInt(int64(val), 10))

|

||||

iter.reportError("ReadUint16", "overflow: "+strconv.FormatInt(int64(val), 10))

|

||||

return

|

||||

}

|

||||

return uint16(val)

|

||||

@ -93,15 +93,15 @@ func (iter *Iterator) ReadInt32() (ret int32) {

|

||||

c := iter.nextToken()

|

||||

if c == '-' {

|

||||

val := iter.readUint32(iter.readByte())

|

||||

if val > int32Max + 1 {

|

||||

iter.reportError("ReadInt32", "overflow: " + strconv.FormatInt(int64(val), 10))

|

||||

if val > int32Max+1 {

|

||||

iter.reportError("ReadInt32", "overflow: "+strconv.FormatInt(int64(val), 10))

|

||||

return

|

||||

}

|

||||

return -int32(val)

|

||||

} else {

|

||||

val := iter.readUint32(c)

|

||||

if val > int32Max {

|

||||

iter.reportError("ReadInt32", "overflow: " + strconv.FormatInt(int64(val), 10))

|

||||

iter.reportError("ReadInt32", "overflow: "+strconv.FormatInt(int64(val), 10))

|

||||

return

|

||||

}

|

||||

return int32(val)

|

||||

@ -118,11 +118,11 @@ func (iter *Iterator) readUint32(c byte) (ret uint32) {

|

||||

return 0 // single zero

|

||||

}

|

||||

if ind == invalidCharForNumber {

|

||||

iter.reportError("readUint32", "unexpected character: " + string([]byte{byte(ind)}))

|

||||

iter.reportError("readUint32", "unexpected character: "+string([]byte{byte(ind)}))

|

||||

return

|

||||

}

|

||||

value := uint32(ind)

|

||||

if iter.tail - iter.head > 10 {

|

||||

if iter.tail-iter.head > 10 {

|

||||

i := iter.head

|

||||

ind2 := intDigits[iter.buf[i]]

|

||||

if ind2 == invalidCharForNumber {

|

||||

@@ -133,7 +133,7 @@ func (iter *Iterator) readUint32(c byte) (ret uint32) {

|

||||

ind3 := intDigits[iter.buf[i]]

|

||||

if ind3 == invalidCharForNumber {

|

||||

iter.head = i

|

||||

return value * 10 + uint32(ind2)

|

||||

return value*10 + uint32(ind2)

|

||||

}

|

||||

//iter.head = i + 1

|

||||

//value = value * 100 + uint32(ind2) * 10 + uint32(ind3)

|

||||

@@ -141,35 +141,35 @@ func (iter *Iterator) readUint32(c byte) (ret uint32) {

|

||||

ind4 := intDigits[iter.buf[i]]

|

||||

if ind4 == invalidCharForNumber {

|

||||

iter.head = i

|

||||

return value * 100 + uint32(ind2) * 10 + uint32(ind3)

|

||||

return value*100 + uint32(ind2)*10 + uint32(ind3)

|

||||

}

|

||||

i++

|

||||

ind5 := intDigits[iter.buf[i]]

|

||||

if ind5 == invalidCharForNumber {

|

||||

iter.head = i

|

||||

return value * 1000 + uint32(ind2) * 100 + uint32(ind3) * 10 + uint32(ind4)

|

||||

return value*1000 + uint32(ind2)*100 + uint32(ind3)*10 + uint32(ind4)

|

||||

}

|

||||

i++

|

||||

ind6 := intDigits[iter.buf[i]]

|

||||

if ind6 == invalidCharForNumber {

|

||||

iter.head = i

|

||||

return value * 10000 + uint32(ind2) * 1000 + uint32(ind3) * 100 + uint32(ind4) * 10 + uint32(ind5)

|

||||

return value*10000 + uint32(ind2)*1000 + uint32(ind3)*100 + uint32(ind4)*10 + uint32(ind5)

|

||||

}

|

||||

i++

|

||||

ind7 := intDigits[iter.buf[i]]

|

||||

if ind7 == invalidCharForNumber {

|

||||

iter.head = i

|

||||

return value * 100000 + uint32(ind2) * 10000 + uint32(ind3) * 1000 + uint32(ind4) * 100 + uint32(ind5) * 10 + uint32(ind6)

|

||||

return value*100000 + uint32(ind2)*10000 + uint32(ind3)*1000 + uint32(ind4)*100 + uint32(ind5)*10 + uint32(ind6)

|

||||

}

|

||||

i++

|

||||

ind8 := intDigits[iter.buf[i]]

|

||||

if ind8 == invalidCharForNumber {

|

||||

iter.head = i

|

||||

return value * 1000000 + uint32(ind2) * 100000 + uint32(ind3) * 10000 + uint32(ind4) * 1000 + uint32(ind5) * 100 + uint32(ind6) * 10 + uint32(ind7)

|

||||

return value*1000000 + uint32(ind2)*100000 + uint32(ind3)*10000 + uint32(ind4)*1000 + uint32(ind5)*100 + uint32(ind6)*10 + uint32(ind7)

|

||||

}

|

||||

i++

|

||||

ind9 := intDigits[iter.buf[i]]

|

||||

value = value * 10000000 + uint32(ind2) * 1000000 + uint32(ind3) * 100000 + uint32(ind4) * 10000 + uint32(ind5) * 1000 + uint32(ind6) * 100 + uint32(ind7) * 10 + uint32(ind8)

|

||||

value = value*10000000 + uint32(ind2)*1000000 + uint32(ind3)*100000 + uint32(ind4)*10000 + uint32(ind5)*1000 + uint32(ind6)*100 + uint32(ind7)*10 + uint32(ind8)

|

||||

iter.head = i

|

||||

if ind9 == invalidCharForNumber {

|

||||

return value

|

||||

@@ -194,7 +194,7 @@ func (iter *Iterator) readUint32(c byte) (ret uint32) {

|

||||

}

|

||||

value = (value << 3) + (value << 1) + uint32(ind)

|

||||

}

|

||||

if (!iter.loadMore()) {

|

||||

if !iter.loadMore() {

|

||||

return value

|

||||

}

|

||||

}

|

||||

@@ -204,15 +204,15 @@ func (iter *Iterator) ReadInt64() (ret int64) {

|

||||

c := iter.nextToken()

|

||||

if c == '-' {

|

||||

val := iter.readUint64(iter.readByte())

|

||||

if val > int64Max + 1 {

|

||||

iter.reportError("ReadInt64", "overflow: " + strconv.FormatUint(uint64(val), 10))

|

||||

if val > int64Max+1 {

|

||||

iter.reportError("ReadInt64", "overflow: "+strconv.FormatUint(uint64(val), 10))

|

||||

return

|

||||

}

|

||||

return -int64(val)

|

||||

} else {

|

||||

val := iter.readUint64(c)

|

||||

if val > int64Max {

|

||||

iter.reportError("ReadInt64", "overflow: " + strconv.FormatUint(uint64(val), 10))

|

||||

iter.reportError("ReadInt64", "overflow: "+strconv.FormatUint(uint64(val), 10))

|

||||

return

|

||||

}

|

||||

return int64(val)

|

||||

@@ -229,7 +229,7 @@ func (iter *Iterator) readUint64(c byte) (ret uint64) {

|

||||

return 0 // single zero

|

||||

}

|

||||

if ind == invalidCharForNumber {

|

||||

iter.reportError("readUint64", "unexpected character: " + string([]byte{byte(ind)}))

|

||||

iter.reportError("readUint64", "unexpected character: "+string([]byte{byte(ind)}))

|

||||

return

|

||||

}

|

||||

value := uint64(ind)

|

||||

@@ -252,7 +252,7 @@ func (iter *Iterator) readUint64(c byte) (ret uint64) {

|

||||

}

|

||||

value = (value << 3) + (value << 1) + uint64(ind)

|

||||

}

|

||||

if (!iter.loadMore()) {

|

||||

if !iter.loadMore() {

|

||||

return value

|

||||

}

|

||||

}

|

||||

|

||||

@@ -1,5 +1,10 @@

|

||||

package jsoniter

|

||||

|

||||

import (

|

||||

"fmt"

|

||||

"unicode"

|

||||

)

|

||||

|

||||

func (iter *Iterator) ReadObject() (ret string) {

|

||||

c := iter.nextToken()

|

||||

switch c {

|

||||

@@ -22,7 +27,7 @@ func (iter *Iterator) ReadObject() (ret string) {

|

||||

case '}':

|

||||

return "" // end of object

|

||||

default:

|

||||

iter.reportError("ReadObject", `expect { or , or } or n`)

|

||||

iter.reportError("ReadObject", fmt.Sprintf(`expect { or , or } or n, but found %s`, string([]byte{c})))

|

||||

return

|

||||

}

|

||||

}

|

||||

@@ -35,11 +40,14 @@ func (iter *Iterator) readFieldHash() int32 {

|

||||

for i := iter.head; i < iter.tail; i++ {

|

||||

// require ascii string and no escape

|

||||

b := iter.buf[i]

|

||||

if 'A' <= b && b <= 'Z' {

|

||||

b += 'a' - 'A'

|

||||

}

|

||||

if b == '"' {

|

||||

iter.head = i+1

|

||||

iter.head = i + 1

|

||||

c = iter.nextToken()

|

||||

if c != ':' {

|

||||

iter.reportError("readFieldHash", `expect :, but found ` + string([]byte{c}))

|

||||

iter.reportError("readFieldHash", `expect :, but found `+string([]byte{c}))

|

||||

}

|

||||

return int32(hash)

|

||||

}

|

||||

@@ -52,14 +60,14 @@ func (iter *Iterator) readFieldHash() int32 {

|

||||

}

|

||||

}

|

||||

}

|

||||

iter.reportError("readFieldHash", `expect ", but found ` + string([]byte{c}))

|

||||

iter.reportError("readFieldHash", `expect ", but found `+string([]byte{c}))

|

||||

return 0

|

||||

}

|

||||

|

||||

func calcHash(str string) int32 {

|

||||

hash := int64(0x811c9dc5)

|

||||

for _, b := range str {

|

||||

hash ^= int64(b)

|

||||

hash ^= int64(unicode.ToLower(b))

|

||||

hash *= 0x1000193

|

||||

}

|

||||

return int32(hash)

|

||||
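Note on the hunks above: both `readFieldHash` and `calcHash` now fold letters to lower case before mixing them into the hash, so field lookup by hash becomes case-insensitive. The following is a minimal standalone sketch of the same FNV-1a-style loop, using the constants shown in the diff. The function name `foldedHash` is hypothetical. Note that `readFieldHash` folds only ASCII `A`-`Z`, so the two paths are only guaranteed to agree for ASCII field names.

```go
package main

import (
	"fmt"
	"unicode"
)

// foldedHash mixes the lower-cased form of each rune into an
// FNV-1a-style hash and truncates the result to 32 bits.
// Hypothetical name; the constants mirror those in the diff.
func foldedHash(s string) int32 {
	hash := int64(0x811c9dc5)
	for _, r := range s {
		hash ^= int64(unicode.ToLower(r))
		hash *= 0x1000193
	}
	return int32(hash)
}

func main() {
	// Names that differ only in case produce the same hash.
	fmt.Println(foldedHash("UserName") == foldedHash("username"))
	// Names that differ in content still produce different hashes here.
	fmt.Println(foldedHash("a") == foldedHash("b"))
}
```

A consequence of this design is that two struct fields whose names differ only by case would collide, so a caller relying on such a hash would need to resolve that case separately.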

@@ -76,7 +84,7 @@ func (iter *Iterator) ReadObjectCB(callback func(*Iterator, string) bool) bool {

|

||||

return false

|

||||

}

|

||||

for iter.nextToken() == ',' {

|

||||

field := string(iter.readObjectFieldAsBytes())

|

||||

field = string(iter.readObjectFieldAsBytes())

|

||||

if !callback(iter, field) {

|

||||

return false

|

||||

}

|

||||

@@ -97,6 +105,46 @@ func (iter *Iterator) ReadObjectCB(callback func(*Iterator, string) bool) bool {

|

||||

return false

|

||||

}

|

||||

|

||||

func (iter *Iterator) ReadMapCB(callback func(*Iterator, string) bool) bool {

|

||||

c := iter.nextToken()

|

||||

if c == '{' {

|

||||

c = iter.nextToken()

|

||||

if c == '"' {

|

||||

iter.unreadByte()

|

||||

field := iter.ReadString()

|

||||

if iter.nextToken() != ':' {

|

||||

iter.reportError("ReadMapCB", "expect : after object field")

|

||||

return false

|

||||

}

|

||||

if !callback(iter, field) {

|

||||

return false

|

||||

}

|

||||

for iter.nextToken() == ',' {

|

||||

field = iter.ReadString()

|

||||

if iter.nextToken() != ':' {

|

||||

iter.reportError("ReadMapCB", "expect : after object field")

|

||||

return false

|

||||

}

|

||||

if !callback(iter, field) {

|

||||

return false

|

||||

}

|

||||

}

|

||||

return true

|

||||

}

|

||||

if c == '}' {

|

||||

return true

|

||||

}

|

||||

iter.reportError("ReadMapCB", `expect " after }`)

|

||||

return false

|

||||

}

|

||||

if c == 'n' {

|

||||

iter.skipFixedBytes(3)

|

||||

return true // null

|

||||

}

|

||||

iter.reportError("ReadMapCB", `expect { or n`)

|

||||

return false

|

||||

}

|

||||

|

||||

func (iter *Iterator) readObjectStart() bool {

|

||||

c := iter.nextToken()

|

||||

if c == '{' {

|

||||

@@ -106,8 +154,11 @@ func (iter *Iterator) readObjectStart() bool {

|

||||

}

|

||||

iter.unreadByte()

|

||||

return true

|

||||

} else if c == 'n' {

|

||||

iter.skipFixedBytes(3)

|

||||

return false

|

||||

}

|

||||

iter.reportError("readObjectStart", "expect { ")

|

||||

iter.reportError("readObjectStart", "expect { or n")

|

||||

return false

|

||||

}

|

||||

|

||||

|

||||

@@ -29,6 +29,15 @@ func (iter *Iterator) ReadBool() (ret bool) {

|

||||

return

|

||||

}

|

||||

|

||||

func (iter *Iterator) SkipAndReturnBytes() []byte {

|

||||

if iter.reader != nil {

|

||||

panic("reader input does not support this api")

|

||||

}

|

||||

before := iter.head

|

||||

iter.Skip()

|

||||

after := iter.head

|

||||

return iter.buf[before:after]

|

||||

}

|

||||

|

||||

// Skip skips a json object and positions to relatively the next json object

|

||||

func (iter *Iterator) Skip() {

|

||||

@@ -193,15 +202,15 @@ func (iter *Iterator) skipUntilBreak() {

|

||||

}

|

||||

|

||||

func (iter *Iterator) skipFixedBytes(n int) {

|

||||

iter.head += n;

|

||||

if (iter.head >= iter.tail) {

|

||||

more := iter.head - iter.tail;

|

||||

iter.head += n

|

||||

if iter.head >= iter.tail {

|

||||

more := iter.head - iter.tail

|

||||

if !iter.loadMore() {

|

||||

if more > 0 {

|

||||

iter.reportError("skipFixedBytes", "unexpected end");

|

||||

iter.reportError("skipFixedBytes", "unexpected end")

|

||||

}

|

||||

return

|

||||

}

|

||||

iter.head += more;

|

||||

iter.head += more

|

||||

}

|

||||

}

|

||||

}

|

||||

|

||||

@@ -2,64 +2,42 @@ package jsoniter

|

||||

|

||||

import (

|

||||

"unicode/utf16"

|

||||

"unsafe"

|

||||

)

|

||||

|

||||

// TODO: avoid append

|

||||

func (iter *Iterator) ReadString() (ret string) {

|

||||

c := iter.nextToken()

|

||||

if c == '"' {

|

||||

copied := make([]byte, 32)

|

||||

j := 0

|

||||

fast_loop:

|

||||

for {

|

||||

i := iter.head

|

||||

for ; i < iter.tail && j < len(copied); i++ {

|

||||

c := iter.buf[i]

|

||||

if c == '"' {

|

||||

iter.head = i + 1

|

||||

copied = copied[:j]

|

||||

return *(*string)(unsafe.Pointer(&copied))

|

||||

} else if c == '\\' {

|

||||

iter.head = i

|

||||

break fast_loop

|

||||

}

|

||||

copied[j] = c

|

||||

j++

|

||||

}

|

||||

if i == iter.tail {

|

||||

if iter.loadMore() {

|

||||

i = iter.head

|

||||

continue

|

||||

} else {

|

||||

iter.reportError("ReadString", "incomplete string")

|

||||

return

|

||||

}

|

||||

}

|

||||

iter.head = i

|

||||

if j == len(copied) {

|

||||

newBuf := make([]byte, len(copied) * 2)

|

||||

copy(newBuf, copied)

|

||||

copied = newBuf

|

||||

for i := iter.head; i < iter.tail; i++ {

|

||||

c := iter.buf[i]

|

||||

if c == '"' {

|

||||

ret = string(iter.buf[iter.head:i])

|

||||

iter.head = i + 1

|

||||

return ret

|

||||

} else if c == '\\' {

|

||||

break

|

||||

}

|

||||

}

|

||||

return iter.readStringSlowPath(copied[:j])

|

||||

return iter.readStringSlowPath()

|

||||

} else if c == 'n' {

|

||||

iter.skipFixedBytes(3)

|

||||

return ""

|

||||

}

|

||||

iter.reportError("ReadString", `expects " or n`)

|

||||

return

|

||||

}

|

||||

|

||||

func (iter *Iterator) readStringSlowPath(str []byte) (ret string) {

|

||||

func (iter *Iterator) readStringSlowPath() (ret string) {

|

||||

var str []byte

|

||||

var c byte

|

||||

for iter.Error == nil {

|

||||

c = iter.readByte()

|

||||

if c == '"' {

|

||||

return *(*string)(unsafe.Pointer(&str))

|

||||

return string(str)

|

||||

}

|

||||

if c == '\\' {

|

||||

c = iter.readByte()

|

||||

switch c {

|

||||

case 'u':

|

||||

case 'u', 'U':

|

||||

r := iter.readU4()

|

||||

if utf16.IsSurrogate(r) {

|

||||

c = iter.readByte()

|

||||

@@ -75,7 +53,7 @@ func (iter *Iterator) readStringSlowPath(str []byte) (ret string) {

|

||||

if iter.Error != nil {

|

||||

return

|

||||

}

|

||||

if c != 'u' {

|

||||

if c != 'u' && c != 'U' {

|

||||

iter.reportError("ReadString",

|

||||

`expects \u after utf16 surrogate, but \u not found`)

|

||||

return

|

||||

@@ -114,6 +92,7 @@ func (iter *Iterator) readStringSlowPath(str []byte) (ret string) {

|

||||

str = append(str, c)

|

||||

}

|

||||

}

|

||||

iter.reportError("ReadString", "unexpected end of input")

|

||||

return

|

||||

}

|

||||

|

||||

@@ -125,13 +104,13 @@ func (iter *Iterator) ReadStringAsSlice() (ret []byte) {

|

||||

// for: field name, base64, number

|

||||

if iter.buf[i] == '"' {

|

||||

// fast path: reuse the underlying buffer

|

||||

ret = iter.buf[iter.head : i]

|

||||

ret = iter.buf[iter.head:i]

|

||||

iter.head = i + 1

|

||||

return ret

|

||||

}

|

||||

}

|

||||

readLen := iter.tail - iter.head

|

||||

copied := make([]byte, readLen, readLen * 2)

|

||||

copied := make([]byte, readLen, readLen*2)

|

||||

copy(copied, iter.buf[iter.head:iter.tail])

|

||||

iter.head = iter.tail

|

||||

for iter.Error == nil {

|

||||

@@ -154,9 +133,11 @@ func (iter *Iterator) readU4() (ret rune) {

|

||||

return

|

||||

}

|

||||

if c >= '0' && c <= '9' {

|

||||

ret = ret * 16 + rune(c - '0')

|

||||

ret = ret*16 + rune(c-'0')

|

||||

} else if c >= 'a' && c <= 'f' {

|

||||

ret = ret * 16 + rune(c - 'a' + 10)

|

||||

ret = ret*16 + rune(c-'a'+10)

|

||||

} else if c >= 'A' && c <= 'F' {

|

||||

ret = ret*16 + rune(c-'A'+10)

|

||||

} else {

|

||||

iter.reportError("readU4", "expects 0~9 or a~f")

|

||||

return

|

||||
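Note on the hunk above: the added `'A'`-`'F'` branch lets `readU4` accept upper-case hex digits in `\u` escapes. The following is a minimal standalone sketch of the same accumulation over a four-character string rather than an iterator. The name `parseHex4` and its error messages are hypothetical.

```go
package main

import "fmt"

// parseHex4 decodes exactly four hex digits (either case) into a rune.
// Hypothetical helper; it works on a string instead of jsoniter's Iterator.
func parseHex4(digits string) (rune, error) {
	if len(digits) != 4 {
		return 0, fmt.Errorf("expects 4 hex digits, got %d", len(digits))
	}
	var ret rune
	for i := 0; i < 4; i++ {
		c := digits[i]
		switch {
		case c >= '0' && c <= '9':
			ret = ret*16 + rune(c-'0')
		case c >= 'a' && c <= 'f':
			ret = ret*16 + rune(c-'a'+10)
		case c >= 'A' && c <= 'F':
			ret = ret*16 + rune(c-'A'+10)
		default:
			return 0, fmt.Errorf("expects 0~9, a~f or A~F, but found %q", c)
		}
	}
	return ret, nil
}

func main() {
	lower, _ := parseHex4("00e9")
	upper, _ := parseHex4("00E9")
	// Both spellings decode to the same code point.
	fmt.Println(lower == upper, string(lower))
}
```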

@@ -178,14 +159,14 @@ const (

|

||||

mask3 = 0x0F // 0000 1111

|

||||

mask4 = 0x07 // 0000 0111

|

||||

|

||||

rune1Max = 1 << 7 - 1

|

||||

rune2Max = 1 << 11 - 1

|

||||

rune3Max = 1 << 16 - 1

|

||||

rune1Max = 1<<7 - 1

|

||||

rune2Max = 1<<11 - 1

|

||||

rune3Max = 1<<16 - 1

|

||||

|

||||

surrogateMin = 0xD800

|

||||

surrogateMax = 0xDFFF

|

||||

|

||||

maxRune = '\U0010FFFF' // Maximum valid Unicode code point.

|

||||

maxRune = '\U0010FFFF' // Maximum valid Unicode code point.

|

||||

runeError = '\uFFFD' // the "error" Rune or "Unicode replacement character"

|

||||

)

|

||||

|

||||

@@ -196,22 +177,22 @@ func appendRune(p []byte, r rune) []byte {

|

||||

p = append(p, byte(r))

|

||||

return p

|

||||

case i <= rune2Max:

|

||||

p = append(p, t2 | byte(r >> 6))

|

||||

p = append(p, tx | byte(r) & maskx)

|

||||

p = append(p, t2|byte(r>>6))

|

||||

p = append(p, tx|byte(r)&maskx)

|

||||

return p

|

||||

case i > maxRune, surrogateMin <= i && i <= surrogateMax:

|

||||

r = runeError

|

||||

fallthrough

|

||||

case i <= rune3Max:

|

||||

p = append(p, t3 | byte(r >> 12))

|

||||

p = append(p, tx | byte(r >> 6) & maskx)

|

||||

p = append(p, tx | byte(r) & maskx)

|

||||

p = append(p, t3|byte(r>>12))

|

||||

p = append(p, tx|byte(r>>6)&maskx)

|

||||

p = append(p, tx|byte(r)&maskx)

|

||||

return p

|

||||

default:

|

||||

p = append(p, t4 | byte(r >> 18))

|

||||

p = append(p, tx | byte(r >> 12) & maskx)

|

||||

p = append(p, tx | byte(r >> 6) & maskx)

|

||||

p = append(p, tx | byte(r) & maskx)

|

||||

p = append(p, t4|byte(r>>18))

|

||||

p = append(p, tx|byte(r>>12)&maskx)

|

||||

p = append(p, tx|byte(r>>6)&maskx)

|

||||

p = append(p, tx|byte(r)&maskx)

|

||||

return p

|

||||

}

|

||||

}

|

||||

}

|

||||

|

||||

@@ -1,32 +1,38 @@

|

||||

package jsoniter

|

||||

|

||||

import (

|

||||

"encoding"

|

||||

"encoding/json"

|

||||

"fmt"

|

||||

"reflect"

|

||||

"sync/atomic"

|

||||

"unsafe"

|

||||

"errors"

|

||||

)

|

||||

|

||||

/*

|

||||

Reflection on type to create decoders, which is then cached

|

||||

Reflection on value is avoided as we can, as the reflect.Value itself will allocate, with following exceptions

|

||||

1. create instance of new value, for example *int will need a int to be allocated

|

||||

2. append to slice, if the existing cap is not enough, allocate will be done using Reflect.New

|

||||

3. assignment to map, both key and value will be reflect.Value

|

||||

For a simple struct binding, it will be reflect.Value free and allocation free

|

||||

*/

|

||||

|

||||

// Decoder is an internal type registered to cache as needed.

|

||||

// Don't confuse jsoniter.Decoder with json.Decoder.

|

||||

// For json.Decoder's adapter, refer to jsoniter.AdapterDecoder(todo link).

|

||||

//

|

||||

// Reflection on type to create decoders, which is then cached

|

||||

// Reflection on value is avoided as we can, as the reflect.Value itself will allocate, with following exceptions

|

||||

// 1. create instance of new value, for example *int will need a int to be allocated

|

||||

// 2. append to slice, if the existing cap is not enough, allocate will be done using Reflect.New

|

||||

// 3. assignment to map, both key and value will be reflect.Value

|

||||

// For a simple struct binding, it will be reflect.Value free and allocation free

|

||||

type Decoder interface {

|

||||

decode(ptr unsafe.Pointer, iter *Iterator)

|

||||

}

|

||||

|

||||

// Encoder is an internal type registered to cache as needed.

|

||||

// Don't confuse jsoniter.Encoder with json.Encoder.

|

||||

// For json.Encoder's adapter, refer to jsoniter.AdapterEncoder(todo godoc link).

|

||||

type Encoder interface {

|

||||

isEmpty(ptr unsafe.Pointer) bool

|

||||

encode(ptr unsafe.Pointer, stream *Stream)

|

||||

encodeInterface(val interface{}, stream *Stream)

|

||||

}

|

||||

|

||||

func WriteToStream(val interface{}, stream *Stream, encoder Encoder) {

|

||||

func writeToStream(val interface{}, stream *Stream, encoder Encoder) {

|

||||

e := (*emptyInterface)(unsafe.Pointer(&val))

|

||||

if reflect.TypeOf(val).Kind() == reflect.Ptr {

|

||||

encoder.encode(unsafe.Pointer(&e.word), stream)

|

||||

@@ -37,7 +43,7 @@ func WriteToStream(val interface{}, stream *Stream, encoder Encoder) {

|

||||

|

||||

type DecoderFunc func(ptr unsafe.Pointer, iter *Iterator)

|

||||

type EncoderFunc func(ptr unsafe.Pointer, stream *Stream)

|

||||

type ExtensionFunc func(typ reflect.Type, field *reflect.StructField) ([]string, DecoderFunc)

|

||||

type ExtensionFunc func(typ reflect.Type, field *reflect.StructField) ([]string, EncoderFunc, DecoderFunc)

|

||||

|

||||

type funcDecoder struct {

|

||||

fun DecoderFunc

|

||||

@@ -56,7 +62,11 @@ func (encoder *funcEncoder) encode(ptr unsafe.Pointer, stream *Stream) {

|

||||

}

|

||||

|

||||

func (encoder *funcEncoder) encodeInterface(val interface{}, stream *Stream) {

|

||||

WriteToStream(val, stream, encoder)

|

||||

writeToStream(val, stream, encoder)

|

||||

}

|

||||

|

||||

func (encoder *funcEncoder) isEmpty(ptr unsafe.Pointer) bool {

|

||||

return false

|

||||

}

|

||||

|

||||

var DECODERS unsafe.Pointer

|

||||

@@ -67,7 +77,12 @@ var fieldDecoders map[string]Decoder

|

||||

var typeEncoders map[string]Encoder

|

||||

var fieldEncoders map[string]Encoder

|

||||

var extensions []ExtensionFunc

|

||||

var jsonNumberType reflect.Type

|

||||

var jsonRawMessageType reflect.Type

|

||||

var anyType reflect.Type

|

||||

var marshalerType reflect.Type

|

||||

var unmarshalerType reflect.Type

|

||||

var textUnmarshalerType reflect.Type

|

||||

|

||||

func init() {

|

||||

typeDecoders = map[string]Decoder{}

|

||||

@@ -77,7 +92,12 @@ func init() {

|

||||

extensions = []ExtensionFunc{}

|

||||

atomic.StorePointer(&DECODERS, unsafe.Pointer(&map[string]Decoder{}))

|

||||

atomic.StorePointer(&ENCODERS, unsafe.Pointer(&map[string]Encoder{}))

|

||||

jsonNumberType = reflect.TypeOf((*json.Number)(nil)).Elem()

|

||||

jsonRawMessageType = reflect.TypeOf((*json.RawMessage)(nil)).Elem()

|

||||

anyType = reflect.TypeOf((*Any)(nil)).Elem()

|

||||

marshalerType = reflect.TypeOf((*json.Marshaler)(nil)).Elem()

|

||||

unmarshalerType = reflect.TypeOf((*json.Unmarshaler)(nil)).Elem()

|

||||

textUnmarshalerType = reflect.TypeOf((*encoding.TextUnmarshaler)(nil)).Elem()

|

||||

}

|

||||

|

||||

func addDecoderToCache(cacheKey reflect.Type, decoder Decoder) {

|

||||

@@ -143,10 +163,18 @@ func RegisterExtension(extension ExtensionFunc) {

|

||||

extensions = append(extensions, extension)

|

||||

}

|

||||

|

||||

// CleanDecoders cleans decoders registered

|

||||

// CleanDecoders cleans decoders registered or cached

|

||||

func CleanDecoders() {

|

||||

typeDecoders = map[string]Decoder{}

|

||||

fieldDecoders = map[string]Decoder{}

|

||||

atomic.StorePointer(&DECODERS, unsafe.Pointer(&map[string]Decoder{}))

|

||||

}

|

||||

|

||||

// CleanEncoders cleans encoders registered or cached

|

||||

func CleanEncoders() {

|

||||

typeEncoders = map[string]Encoder{}

|

||||

fieldEncoders = map[string]Encoder{}

|

||||

atomic.StorePointer(&ENCODERS, unsafe.Pointer(&map[string]Encoder{}))

|

||||

}

|

||||

|

||||

type optionalDecoder struct {

|

||||

@@ -171,7 +199,6 @@ func (decoder *optionalDecoder) decode(ptr unsafe.Pointer, iter *Iterator) {

|

||||

}

|

||||

|

||||

type optionalEncoder struct {

|

||||

valueType reflect.Type

|

||||

valueEncoder Encoder

|

||||

}

|

||||

|

||||

@@ -184,92 +211,58 @@ func (encoder *optionalEncoder) encode(ptr unsafe.Pointer, stream *Stream) {

|

||||

}

|

||||

|

||||

func (encoder *optionalEncoder) encodeInterface(val interface{}, stream *Stream) {

|

||||

WriteToStream(val, stream, encoder)

|

||||

writeToStream(val, stream, encoder)

|

||||

}

|

||||

|

||||

type mapDecoder struct {

|

||||

mapType reflect.Type

|

||||

elemType reflect.Type

|

||||

elemDecoder Decoder

|

||||

mapInterface emptyInterface

|

||||

}

|

||||

|

||||

func (decoder *mapDecoder) decode(ptr unsafe.Pointer, iter *Iterator) {

|

||||

// dark magic to cast unsafe.Pointer back to interface{} using reflect.Type

|

||||

mapInterface := decoder.mapInterface

|

||||

mapInterface.word = ptr

|

||||

realInterface := (*interface{})(unsafe.Pointer(&mapInterface))

|

||||

realVal := reflect.ValueOf(*realInterface).Elem()

|

||||

|

||||

for field := iter.ReadObject(); field != ""; field = iter.ReadObject() {

|

||||

elem := reflect.New(decoder.elemType)

|

||||

decoder.elemDecoder.decode(unsafe.Pointer(elem.Pointer()), iter)

|

||||

// to put into map, we have to use reflection

|

||||